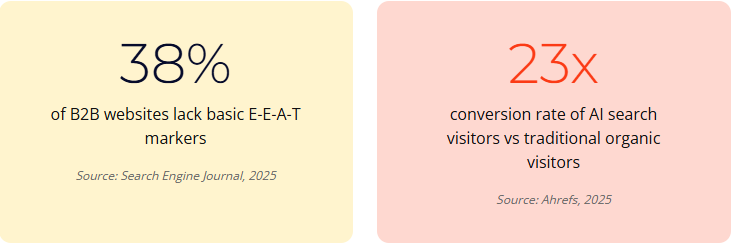

Experience, Expertise, Authoritativeness and Trustworthiness are not abstract quality signals. They are the criteria AI systems and search engines use to decide whether to cite, surface or ignore your content. 96% of AI Overview citations come from verified authoritative sources. 38% of B2B websites lack basic E-E-A-T markers. Your domain has been live for 15 years. That is not the same as being trusted.

Pull up your About page. Open your most recent article. Look at the bylines, the author bios, the publication dates, the credentials. Look at the footer. Look at the schema. Look at the way your organisation is represented across its own website.

Now ask the question nobody at the senior table wants to ask: if a stranger arrived on this site cold, would they conclude that these are the people to trust on this subject? Not “is the brand familiar”. Not “have we been around a while”. Trust. The kind that makes a parent click your treatment guide instead of TikTok. The kind that makes a donor complete the gift instead of bouncing. The kind that makes ChatGPT cite you instead of someone else.

For most regulated and mission-led organisations, the honest answer is no. Not because the expertise is missing. The expertise is the entire reason the organisation exists. The expertise is just not represented on the website in any way an algorithm or a hurried human can verify. The trust is real. The trust signals are not. And in 2026, those are the only things that get measured.

| What You Think Demonstrates Trust | What AI Systems and Search Actually Read | The Gap |
|---|---|---|
| “We have been around since 2009” | Domain age is a weak baseline signal at best | 15 years on the same domain proves longevity, not authority |
| “Everyone in our sector knows our name” | Brand search volume needs to show up in measurable data | If Google is not seeing branded queries at scale, the entity is not recognised |
| “Our content is written by experts” | Named author bylines, bios, credentials, schema | Anonymous expert content is invisible to E-E-A-T evaluators |
| “We are a registered charity / regulated firm” | Registration numbers in footer, schema, About page | If the credential is not on the page, it does not exist for the algorithm |
| “We have a great reputation” | Third-party citations, Wikipedia, industry mentions, awards | Reputation that lives only in conversations is unreadable |

Your domain has been live for 15 years. That is not the same as being trusted. Algorithms and AI systems do not read longevity as authority. They read the active signals you put on the page. If the signals are not there, the trust is not there. If you assumed your offline reputation would translate online, it has not been translating for some time.

What E-E-A-T Actually Is (and What It Is Not)

E-E-A-T stands for Experience, Expertise, Authoritativeness and Trustworthiness. Google added the first E in December 2022, extending the existing E-A-T framework, because it decided that first-hand experience deserved its own pillar, separate from professional expertise. The framework lives inside Google’s Search Quality Rater Guidelines, the document Google uses to brief the human raters who evaluate search results. Their ratings do not rank individual pages directly; Google uses them to assess and refine its ranking systems.

The four pillars are not abstract. They are operational.

- Experience: Has the author actually done this? First-hand product use, lived professional practice, real case work. The thing AI cannot fake.

- Expertise: Are they qualified? Credentials, training, professional registrations, demonstrated knowledge of the subject.

- Authoritativeness: Are they recognised by their peers? Citations, mentions in trusted publications, professional body memberships, industry standing.

- Trustworthiness: Is the organisation itself credible? Transparent ownership, accurate information, honest disclosure, secure infrastructure, regulatory compliance.

The crucial point: Trust is the umbrella. Without it, the other three do not save you. Google’s own guidelines call Trust “the most important member of the E-E-A-T family”. You can have the experience, the expertise and the authority, and still get filtered out if the site itself does not pass basic trust checks.

The Mueller Paradox (the awkward bit)

Here is the line every SEO sceptic will quote at you. Google’s John Mueller has stated, repeatedly, that E-E-A-T is not a direct ranking factor. His exact words: “It’s not something where I would say Google has an E-A-T score and it’s based on five links plus this plus that… there’s no such thing as an E-A-T score.” Technically correct. Functionally meaningless. The thing the raters reward is the thing the algorithms learn to reward. The distinction between “ranking factor” and “training signal that becomes the ranking factor” matters in a Search Engine Journal interview. It does not matter to the team that just lost 70% of their traffic.

The data is unambiguous. Google’s December 2025 core update saw 67% of YMYL sites (health, financial, civic) report negative ranking impacts. Recovery for YMYL sites takes 6 to 12 months, against 2 to 6 months for general sites. The March 2026 core update reduced traffic to mass-produced AI content by 71%, while sites using original data and named expert authors saw a 22% visibility increase. Those penalties did not arrive because of “an E-E-A-T score”. They arrived because the algorithm now rewards what the rater guidelines describe.

Read the rater guidelines once. They run to 182 pages and tell you exactly what Google is training its systems to reward. Then look at your site through that lens. The exercise is unsettling on purpose.

Why YMYL Just Got Bigger

Your Money or Your Life is the category Google applies to topics where bad information could damage someone’s health, finances, safety, or civic participation. YMYL content faces the strictest E-E-A-T evaluation. Healthcare. Charity giving. Education. Financial advice. Legal guidance. Government information. The four sectors this article was written for are all squarely inside YMYL. Most have been for years.

What changed is the scope. Google’s September 2025 Quality Rater Guidelines update broadened the YMYL definition to explicitly include government, civics and elections. AI Overviews now have their own dedicated rating framework inside the same document. As of January 2025, the guidelines also state that content where “all or almost all” main content is AI-generated, and which lacks effort, originality or added value, can receive the “Lowest” Page Quality rating. The lowest. Not “low”. The bottom rung.

If your charity publishes guidance on benefits eligibility, that is YMYL. If your professional services firm publishes commentary on tax changes, that is YMYL. If your education provider publishes anything that influences a parent’s choice of school, that is YMYL. The standard you are being evaluated against is the same standard Google applies to medical advice. Most organisations are not behaving as though they know that.

Beyond the scope expansion, the consequences sharpened. The recovery timeline gap (6-12 months for YMYL versus 2-6 months for general content) means that a YMYL site hit by a core update can spend up to a year quietly bleeding visibility before it stabilises. That is not an SEO problem. That is a financial planning problem.

The Six Categories of E-E-A-T Signal

This is the framework we use when auditing a regulated or mission-led organisation’s content estate. Six categories. Concrete checks within each. The point of the framework is to make E-E-A-T diagnostic, not philosophical.

1. Author signals

- Named bylines on every article (no “Admin”, no “Communications Team”)

- Detailed author bios with photo, full name, credentials (MD, PhD, FCA, RGN, etc.)

- Links to LinkedIn, professional registrations, published research

- Person schema with sameAs property linking to verified profiles

- jobTitle and affiliation in schema

72% of top-ranking websites now feature detailed author biographies with verifiable credentials. The “Communications Team” byline is now a signal of weakness, not house style.
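The author checks above translate into a single JSON-LD block on each author bio page. What follows is a minimal sketch, assuming a Python build step renders those pages. The `person_jsonld` helper, along with every name, URL and registration number in it, is hypothetical. The property names (`sameAs`, `jobTitle`, `affiliation`, `honorificSuffix`, `hasCredential`) are standard schema.org Person vocabulary. Encoding a professional registration through `hasCredential` is one reasonable option, not something the checklist prescribes.

```python
import json


def person_jsonld(name, job_title, credentials, employer, profile_urls, registration=None):
    """Build a schema.org Person object for an author bio page (hypothetical helper)."""
    person = {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        # Post-nominal credentials such as "MD, PhD" or "FCA".
        "honorificSuffix": credentials,
        "affiliation": {"@type": "Organization", "name": employer},
        # sameAs ties the author to external profiles a machine can cross-check.
        "sameAs": list(profile_urls),
    }
    if registration is not None:
        # registration is a (regulator name, registration number) pair.
        regulator, number = registration
        person["hasCredential"] = {
            "@type": "EducationalOccupationalCredential",
            "credentialCategory": "Professional registration",
            "recognizedBy": {"@type": "Organization", "name": regulator},
            "identifier": number,
        }
    return person


# Hypothetical author: every name, URL and number below is a placeholder.
author = person_jsonld(
    name="Dr Jane Example",
    job_title="Consultant Paediatrician",
    credentials="MD, PhD",
    employer="Example Health Trust",
    profile_urls=[
        "https://www.linkedin.com/in/jane-example",
        "https://www.example.org/authors/jane-example",
    ],
    registration=("General Medical Council", "0000000"),
)

# Emit the tag to paste into the author bio page template.
print('<script type="application/ld+json">')
print(json.dumps(author, indent=2, ensure_ascii=False))
print("</script>")
```

Because the bio page, the byline and the markup all draw on the same record, the credential a reader sees and the credential a crawler reads cannot drift apart.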

2. Page-level signals

- Visible publication date

- Visible “last reviewed” or “last updated” date with reviewer name

- Citations to named studies, not “research shows”

- Inline links to authoritative sources (NHS, NICE, government, peer-reviewed journals)

- Clear methodology or sourcing for any claims made
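Some page-level checks can be automated across a content estate. The sketch below flags unnamed-source phrasing of the “research shows” kind, a missing or stale review date, and a missing reviewer name. The phrase list is illustrative rather than exhaustive. The 365-day staleness threshold is an assumption, since the checklist sets no review interval. The `page_level_issues` function is hypothetical.

```python
import re
from datetime import date

# Phrases that assert evidence without naming it. Illustrative, not exhaustive.
VAGUE_ATTRIBUTION = re.compile(
    r"\b(research|studies|experts|evidence)\s+(shows?|suggests?|says?|finds?)\b",
    re.IGNORECASE,
)


def page_level_issues(text, last_reviewed, reviewer, today, max_age_days=365):
    """Return human-readable page-level problems for one article (hypothetical helper)."""
    issues = []
    for match in VAGUE_ATTRIBUTION.finditer(text):
        issues.append(f"Unnamed source: '{match.group(0)}'")
    if last_reviewed is None:
        issues.append("No visible last-reviewed date")
    elif (today - last_reviewed).days > max_age_days:
        # max_age_days is an assumed review interval, not a published rule.
        issues.append(
            f"Last reviewed {last_reviewed.isoformat()}, more than {max_age_days} days ago"
        )
    if not reviewer:
        issues.append("No named reviewer")
    return issues


for issue in page_level_issues(
    "Research shows early referral helps. Studies suggest most families wait too long.",
    last_reviewed=date(2024, 1, 10),
    reviewer=None,
    today=date(2026, 3, 1),
):
    print(issue)
```

A regex cannot judge whether a named source is a good one. It only surfaces the sentences a human editor should look at first.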

3. Site-level signals

- Comprehensive About page with leadership, ownership, organisational history

- Contact page with real address, telephone, named contacts (not just a form)

- Privacy policy, T&Cs, cookie policy, accessibility statement

- Regulatory or registration numbers in footer (Charity Commission, SRA, ICAEW, RICS, GMC)

- Consistent NAP (Name, Address, Phone) across the entire web presence
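NAP consistency is a comparison problem: the same organisation is written slightly differently in each place it is listed. Here is a minimal sketch that normalises name, address and phone before comparing each listing against the website. It assumes UK numbers, treating `+44 20…`, `+44 (0)20…` and `020…` as equal. The listings and all helper names are hypothetical.

```python
import re


def normalise_text(value):
    """Lower-case, replace punctuation with spaces and collapse whitespace."""
    return " ".join(re.sub(r"[^\w\s]", " ", value.lower()).split())


def normalise_phone(phone):
    """Reduce a UK number to national-format digits, e.g. '02079460000'."""
    digits = re.sub(r"\D", "", phone)
    if digits.startswith("44"):
        # Assumes a UK country code: drop it, plus any "(0)" trunk digit, then restore one 0.
        digits = "0" + digits[2:].lstrip("0")
    return digits


def nap_key(record):
    return (
        normalise_text(record["name"]),
        normalise_text(record["address"]),
        normalise_phone(record["phone"]),
    )


def inconsistent_listings(listings, reference="website"):
    """Return the sources whose normalised NAP differs from the reference listing."""
    expected = nap_key(listings[reference])
    return sorted(src for src, rec in listings.items() if nap_key(rec) != expected)


# Hypothetical listings gathered from the site, a maps profile and a directory.
listings = {
    "website": {"name": "Example Advice Trust", "address": "1 High Street, Exampleton, EX1 2AB", "phone": "020 7946 0000"},
    "maps": {"name": "Example Advice Trust", "address": "1 High Street Exampleton EX1 2AB", "phone": "+44 (0)20 7946 0000"},
    "directory": {"name": "Example Advice Trust Ltd", "address": "1 High St, Exampleton", "phone": "020 7946 0000"},
}
print(inconsistent_listings(listings))
```

Formatting differences such as commas and international dialling prefixes are deliberately ignored. Substantive differences, like a “Ltd” suffix or a missing postcode, are reported.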

4. External signals

- Wikipedia page or notability sufficient to support one

- Mentions on authoritative third-party sites (sector publications, regulators, partner organisations)

- Industry awards, professional body listings, accreditation marks displayed on site

- Citations in journalism, academic research, government reports

- Named experts quoted in mainstream coverage

5. Schema and structured data

- Organization schema (or specialised: MedicalOrganization, GovernmentOrganization, EducationalOrganization)

- Person schema for every author

- Article schema with author, datePublished, dateModified

- BreadcrumbList for navigation context

- FAQPage where appropriate

JSON-LD has reached 41% adoption across the wider web, but 68% of top-ranking sites use structured data including author schema. The gap between average and top-performing is the gap between visible and invisible.
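The schema items above work best as one connected graph rather than isolated snippets. In the sketch below, `@id` references tie the Article to its author Person and publisher Organization, and a BreadcrumbList sits alongside them. The domain, page and names are hypothetical. The `dangling_references` check is an illustrative helper, not part of any validator. It catches the common failure where an article points at an author entity that no page actually defines.

```python
import json

SITE = "https://www.example.org"  # hypothetical domain
ORG_ID = f"{SITE}/#organization"
AUTHOR_ID = f"{SITE}/authors/jane-example#person"
PAGE_URL = f"{SITE}/guides/benefits-eligibility"

graph = {
    "@context": "https://schema.org",
    "@graph": [
        {"@type": "Organization", "@id": ORG_ID, "name": "Example Advice Trust", "url": SITE},
        {"@type": "Person", "@id": AUTHOR_ID, "name": "Jane Example", "worksFor": {"@id": ORG_ID}},
        {
            "@type": "Article",
            "@id": f"{PAGE_URL}#article",
            "headline": "Understanding benefits eligibility",
            "author": {"@id": AUTHOR_ID},
            "publisher": {"@id": ORG_ID},
            "datePublished": "2025-06-02",
            "dateModified": "2026-01-15",
        },
        {
            "@type": "BreadcrumbList",
            "itemListElement": [
                {"@type": "ListItem", "position": 1, "name": "Home", "item": f"{SITE}/"},
                {"@type": "ListItem", "position": 2, "name": "Guides", "item": f"{SITE}/guides/"},
                {"@type": "ListItem", "position": 3, "name": "Understanding benefits eligibility", "item": PAGE_URL},
            ],
        },
    ],
}


def dangling_references(doc):
    """Return {"@id": ...} references that no node in the graph defines."""
    defined = {node["@id"] for node in doc["@graph"] if "@id" in node}
    referenced = set()

    def walk(value):
        if isinstance(value, dict):
            if set(value) == {"@id"}:
                referenced.add(value["@id"])
            for child in value.values():
                walk(child)
        elif isinstance(value, list):
            for child in value:
                walk(child)

    walk(doc["@graph"])
    return referenced - defined


print(dangling_references(graph))  # set() when every reference resolves
print(json.dumps(graph, indent=2))
```

In practice the Organization and Person nodes would usually live on the About and author pages, with each article referencing them by the same `@id`.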

6. Sector-specific signals

- Healthcare: HONcode certification (legacy only; the Health On the Net Foundation discontinued it in 2022), NHS-aligned guidance referencing, GMC-registered authors, medical reviewer bylines

- Charities: Charity Commission registration number, trustee identification, audited accounts published, GuideStar/Candid seal

- Education: Accreditation bodies (QAA, CHEA, regional accreditors), institutional affiliations, faculty credentials

- Professional services: Practising certificate numbers, Code of Ethics statements, regulatory body memberships

The full audit covers all six categories. Most organisations have gaps in three or four. Closing them is not a multi-year transformation programme. It is a quarter of focused work, rationally prioritised.

The Sector Audit: What Each Sector Gets Wrong

The pattern of E-E-A-T failure is consistent within each sector. The fix is, too.

Healthcare

The most common failure is anonymous medical content. A symptom guide or treatment overview written by “the team” or by a freelance copywriter with no medical background. Search engines and AI systems applying YMYL standards will deprioritise this content regardless of the underlying organisation’s credibility. The fix: every piece of clinical content has a named medical author and a named medical reviewer, both with credentials, both with Person schema linking to professional registration. Last-reviewed dates appear visibly on the page. Citations link to NHS, NICE, peer-reviewed journals.
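The healthcare fix maps onto schema.org’s `MedicalWebPage` type. `author`, `reviewedBy` and `lastReviewed` are standard properties, the latter two inherited from `WebPage`. Below is a minimal sketch. Its guard rules, a reviewer distinct from the author and a review date no earlier than publication, are assumptions drawn from the paragraph above rather than schema.org requirements. The names, credentials and page are hypothetical.

```python
import json
from datetime import date


def medical_page_jsonld(headline, author, reviewer, published, last_reviewed, citations):
    """Build MedicalWebPage JSON-LD with a named author and a separate named reviewer."""
    if author["name"] == reviewer["name"]:
        raise ValueError("Clinical content needs a reviewer distinct from the author")
    if last_reviewed < published:
        raise ValueError("lastReviewed cannot predate datePublished")
    return {
        "@context": "https://schema.org",
        "@type": "MedicalWebPage",
        "headline": headline,
        "author": {"@type": "Person", **author},
        "reviewedBy": {"@type": "Person", **reviewer},
        "datePublished": published.isoformat(),
        "lastReviewed": last_reviewed.isoformat(),
        # Named sources, not "research shows".
        "citation": citations,
    }


# Hypothetical page, author and reviewer.
page = medical_page_jsonld(
    headline="Childhood asthma: when to seek help",
    author={"name": "Dr Jane Example", "honorificSuffix": "MBBS, MRCPCH"},
    reviewer={"name": "Dr Sam Reviewer", "honorificSuffix": "MD, FRCP"},
    published=date(2025, 9, 1),
    last_reviewed=date(2026, 2, 12),
    citations=["https://www.nhs.uk/", "https://www.nice.org.uk/"],
)
print(json.dumps(page, indent=2))
```

The markup should mirror what is visible on the page: the same reviewer name and the same last-reviewed date a reader can see.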

Patient trust is shifting. 38% of UK and US adults aged 18-34 have disregarded their healthcare provider’s medical guidance in favour of social media (TechTarget, 2024-25). Healthcare organisations are competing not just with each other but with TikTok. The trust signals that make a clinician-authored article more credible than a 60-second video are the only thing that closes that gap.

Charities

In the UK, 57% of adults report high trust in charities, level with 2024, and only doctors rank higher (Charity Commission, 2025). That trust is the sector’s biggest asset, and the one most exposed to E-E-A-T failure online. Common gaps: Charity Commission registration number missing from the footer, trustees not visible on the About page, audited accounts not published or hard to find, no Organization schema, no named experts on impact stories.
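Two of those gaps, the footer number and the missing Organization schema, can be closed from a single source of truth. This sketch generates both from the same registration number so they cannot disagree. It uses schema.org’s `NGO` type and a `PropertyValue` identifier. The charity, its number, the trustees and both helper functions are hypothetical. Listing trustees under `member` is one reasonable encoding, not a prescribed one.

```python
import json


def charity_jsonld(name, url, charity_number, trustees):
    """Organization markup for a registered charity, using schema.org's NGO type."""
    return {
        "@context": "https://schema.org",
        "@type": "NGO",
        "name": name,
        "url": url,
        # The registration number as a machine-readable identifier.
        "identifier": {
            "@type": "PropertyValue",
            "propertyID": "Charity Commission for England and Wales registered charity number",
            "value": charity_number,
        },
        # Trustees named on the About page, mirrored in markup.
        "member": [{"@type": "Person", "name": t, "jobTitle": "Trustee"} for t in trustees],
    }


def footer_line(name, charity_number):
    """Plain-text footer credential, shown on every page."""
    return f"{name} is a registered charity in England and Wales (No. {charity_number})."


# Hypothetical charity details.
NUMBER = "1234567"
org = charity_jsonld("Example Advice Trust", "https://www.example.org", NUMBER, ["Alex Trustee", "Priya Trustee"])
print(footer_line("Example Advice Trust", NUMBER))
print(json.dumps(org, indent=2))
```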

The transparency lift is real. Nonprofits with a GuideStar/Candid Seal of Transparency receive 53% more in contributions (a long-running figure, but still widely cited). The signal works at the donor decision level and at the algorithmic level. Both audiences are looking for the same thing: proof that the organisation is what it claims to be.

Education

Educational institutions tend to assume that institutional standing speaks for itself. It does not, online. Common gaps: faculty credentials hidden in deep institutional pages rather than surfaced on content; accreditation marks present but not in schema; programme pages without named academic leads; research outputs not connected to author profiles via sameAs. The fix is largely structural: the data exists, it just needs to be surfaced and linked.

Professional services

The classic failure mode is the firm-led byline. Articles attributed to “[Firm Name] LLP” rather than to named partners or solicitors. The expertise is the entire commercial proposition, but the website hides it. Add named author bylines with practising certificate numbers, regulatory body links, professional headshots and Person schema. The expertise becomes legible to algorithms and to prospects in the same edit. Worth doing yesterday.

What the Data Says About Failure

The cost of getting E-E-A-T wrong is now measurable in lost commercial outcomes, not just lost rankings.

The Ahrefs data is the part most boards underestimate. AI search visitors convert at 23 times the rate of traditional organic visitors. Over a 30-day period, visitors from AI platforms made up 0.5% of Ahrefs’ total traffic but generated 12.1% of its signups. Being uncited by AI is not just an SEO problem. It is a pipeline problem. Each missing E-E-A-T signal slightly reduces the probability that AI chooses you as its source, and that reduction is multiplied across the thousands of queries about your sector every day.

Brand search volume now correlates with LLM citations at 0.334, more strongly than backlinks (The Digital Bloom, 2025). The trust hierarchy AI uses to decide who gets cited triangulates across roughly 30 to 50 entity signals before deciding whether an organisation is “real” enough to cite. Your About page, your Wikipedia presence, your consistent NAP, your registered numbers, your schema, your awards: each is a triangulation point. Missing five or six of them does not get rounded up. It gets you filtered out.

Why AI Makes E-E-A-T More Important, Not Less

The argument that “AI changes everything, so the old SEO rules don’t matter” is the most expensive misreading of the moment. AI systems do not stop caring about authority. They care more, because they must decide which handful of sources to cite in answer to a single question. Traditional Google could rank ten results and let the user choose. ChatGPT cites one to three.

Each AI engine weights signals differently. ChatGPT leans heavily on Wikipedia and structured knowledge. Perplexity pulls 46.5% of citations from Reddit. Google AI Overviews pull about 21% from Reddit. Only 11% of cited domains are cited by both ChatGPT and Perplexity. The fragmentation means you cannot optimise for one and call it done. You optimise for the consistent signal across all of them, which is verified entity authority. Person schema. Organization schema. Knowledge Graph recognition. Named experts. Real third-party mentions. The basics, executed well, are the only thing that travels across all of these engines.

This is the territory generative engine optimisation services exist to operate in. Not chasing each engine’s quirks individually. Building the trust infrastructure that all of them read. The work is unglamorous and consistent. So is most of what generates compounding visibility.

The Counterpoint: AI Content Is Not the Enemy

One nuance the article would be incomplete without. Google does not penalise AI content for being AI content. It penalises low-effort, anonymous, undifferentiated AI content. Sites running hybrid AI/editor pipelines, with named authors adding genuine expertise, citation, and editorial judgment to AI-drafted content, have shown 12% organic click increases in case studies. The signal Google’s raters care about is whether the content displays effort, originality and value, regardless of how it was produced.

That is the distinction that matters for regulated and mission-led organisations. AI is fine as a drafting tool. AI as a substitute for named expertise is the thing that triggers the “Lowest” Page Quality rating. Use AI to produce drafts your in-house experts edit and sign their names to. Do not use AI to produce content nobody is willing to attach their name to. The signal is in the name on the byline, not the tool that generated the prose.

For more on the working model, see our piece on AI-powered content systems, and read the broader case for quality over volume in the Fuel Room. The work that wins under the current rater guidelines also wins under the next ones, because the underlying logic is consistent: trust signals decide who gets cited.

What to Do This Quarter

The mistake most organisations make is treating E-E-A-T as a project for “next year”. The cost of waiting is six months of visibility you cannot get back. The fix is not a transformation programme. It is a sequenced 90-day push that delivers measurable improvement.

Audit first. Use the framework above. Score every category from 0 (no signals present) to 5 (best practice executed). Total out of 30. Anything under 18 is at active risk in the next core update. Anything under 12 is bleeding visibility right now without anyone in the team noticing.
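The scoring arithmetic is simple enough to encode. That way every audit applies the same thresholds. In this sketch, the six category keys are labels chosen for the example. The article defines only two bands, under 12 and under 18, so the wording for a total of 18 or more is an assumption. `audit_band` is a hypothetical helper.

```python
CATEGORIES = ("author", "page", "site", "external", "schema", "sector")


def audit_band(scores):
    """Total six 0-5 category scores and map the total onto the audit thresholds."""
    missing = set(CATEGORIES) - set(scores)
    if missing:
        raise ValueError(f"Unscored categories: {sorted(missing)}")
    for category in CATEGORIES:
        if scores[category] not in range(6):
            raise ValueError(f"{category} must be an integer from 0 to 5")
    total = sum(scores[c] for c in CATEGORIES)  # maximum 30
    if total < 12:
        band = "bleeding visibility now"
    elif total < 18:
        band = "at active risk in the next core update"
    else:
        band = "above the stated risk thresholds"  # wording assumed, not from the article
    return total, band


print(audit_band({"author": 2, "page": 3, "site": 4, "external": 1, "schema": 1, "sector": 3}))
# → (14, 'at active risk in the next core update')
```

Keeping the per-category scores, not just the total, matters more than the band. A 14 made up of zeros in author and schema signals calls for a different quarter of work than a 14 spread evenly.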

Then prioritise. Author bylines and bios first, because they unlock everything else. Schema second, because it is the cheapest high-impact fix on the list. Site-level trust signals third, because they protect the basics. External signals last, because they take time to earn but compound when they arrive. Foundational SEO services still matter beneath all of this; E-E-A-T sits on top of, not in place of, technical and content fundamentals.

Your Action Plan: The 30-Point E-E-A-T Audit

- Add named bylines to every piece of content. Replace “Admin”, “Communications Team” and firm-name attributions with named authors. Single highest-leverage fix.

- Build full author bio pages. Photo, full name, credentials, role, brief biography, links to LinkedIn, registration numbers. Person schema with sameAs.

- Implement Organization or sector-specific schema. MedicalOrganization, EducationalOrganization, or NGO for charities. Include registration numbers and addresses.

- Make publication and review dates visible. Every article shows when it was published and when it was last reviewed. For YMYL, add the reviewer’s name and credentials.

- Audit your About and Contact pages. Real address, telephone, named leadership, organisational history, regulatory or registration numbers, ownership disclosure.

- Surface regulatory credentials in the footer. Charity Commission number, SRA, ICAEW, RICS, GMC, accreditation marks. Visible site-wide.

- Replace “studies show” with named sources. Every claim cites a specific study, organisation or report by name and links out.

- Audit external signals. Wikipedia presence, mentions on sector publications, regulatory body listings, awards. PR is now a search function.

- Score your YMYL content separately. Apply the highest E-E-A-T standard to anything touching health, finance, civics, education or wellbeing.

- Read the Quality Rater Guidelines once. All 182 pages. The team that has read them has a permanent advantage over the team that has not.

Frequently Asked Questions

Is E-E-A-T actually a Google ranking factor?

Officially, no. Google’s John Mueller has stated repeatedly that there is no E-A-T score. Functionally, the distinction is academic. In the December 2025 core update, 67% of YMYL sites reported negative ranking impacts; in the March 2026 update, mass-produced AI content lost 71% of its traffic. What the raters reward becomes what the algorithm rewards.

What are the most important E-E-A-T signals to fix first?

Start with named author bylines and detailed bios with credentials. 72% of top-ranking sites now have these. After that, Person and Organization schema, About and Contact pages, and regulatory/registration numbers in the footer.

Does E-E-A-T matter for AI search citation?

More, not less. 96% of AI Overview content comes from verified authoritative sources. Brand search volume correlates with LLM citation at 0.334, stronger than backlinks. AI systems triangulate authority across 30-50 entity signals.

How does E-E-A-T apply to YMYL content?

YMYL content faces the highest E-E-A-T standard. 67% of YMYL sites lost rankings in the December 2025 core update. Recovery takes 6-12 months, versus 2-6 months for non-YMYL sites. Named, credentialed authors, citations, transparent identity and visible review dates are not optional.

Is our 15-year-old domain enough to demonstrate trust?

No. Domain age is a weak baseline signal at best and no substitute for active trust signals. AI systems look for entity recognition, author credentials, schema markup and third-party mentions. A 2-year-old domain with these can outrank a 15-year-old domain without them.

Sources

- Google Search Quality Rater Guidelines (2025) – YMYL definition expanded to include government, civics and elections (11 September 2025 update, 182 pages)

- ALM Corp (2025) – 67% of YMYL sites reported negative ranking impacts from December 2025 core update; recovery takes 6-12 months for YMYL vs 2-6 months for general sites

- JetDigitalPro / OpenPR (2026) – Google’s March 2026 Core Update reduced traffic to mass-produced AI content by 71%; sites using original data saw 22% visibility increase (600,000 pages analysed)

- Digital Applied (2026) – 72% of top-ranking websites feature detailed author biographies with verifiable credentials

- SE Ranking (2026) – 96% of AI Overview content comes from verified authoritative sources

- The Digital Bloom (2025) – Brand search volume strongest predictor of LLM citations (0.334 correlation, stronger than backlinks); only 11% of domains cited by both ChatGPT and Perplexity

- Search Engine Journal via Hashmeta (2025) – 38% of B2B websites lack basic E-E-A-T markers

- Ahrefs via Position Digital (2025) – AI search visitors convert at 23x the rate of traditional organic; 0.5% of traffic from AI generated 12.1% of signups

- Search Engine Journal interview with John Mueller – “There’s no such thing as an E-A-T score” (counterpoint quote)

- Stan Ventures (2025) – AI-generated content with no effort/originality can receive “Lowest” Page Quality rating (January 2025 QRG update)

- Charity Commission Public Trust in Charities (2025) – 57% of UK adults have high trust in charities; only doctors ranked higher

- GuideStar / Candid Transparency Research – Nonprofits with Seal of Transparency receive 53% more in contributions (long-running data)

- TechTarget Patient Engagement (2024-25) – 38% of UK/US adults aged 18-34 disregarded healthcare provider guidance in favour of social media

- Ofcom (2024-25) – 40% of UK adults encountered misinformation in the previous four weeks; only 26% use search engines to verify

- Moz / SEJ via Ranking Generals (2024-25) – 68% of top-ranking sites use structured data including author schema; JSON-LD adoption now 41% across the web