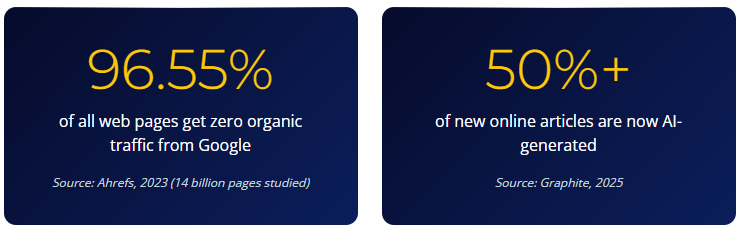

Because 96.55% of all web pages get zero traffic from Google. Because AI systems cite human-written content at more than 4x the rate of AI-generated content. Because flooding your site with volume is a strategy in the same way that shouting in a library is a communication plan. Here is why your board is wrong about this one.

Someone on your board saw a demo. Or read an article in the FT. Or had a conversation at a dinner party with someone who had a conversation with someone who works in AI. The result is always the same email, worded slightly differently each time: “If AI can write content now, why aren’t we producing more of it?”

It is a reasonable question. On the surface. AI writing tools have reduced the cost of content production to approximately nothing. The time to publish a 1,500-word article has gone from two weeks to twenty minutes. The logical conclusion, if you have never actually worked in content strategy, is that more is better. If the bottleneck was always production, and production is now free, the answer must be volume.

It is not. The answer has never been volume. And the organisations currently flooding their sites with AI-generated content at scale are learning this the expensive way.

| The Volume Argument | The Reality | What Actually Happened |

|---|---|---|

| “More content means more keywords covered” | 96.55% of web pages get zero Google traffic | You published 200 articles. Google ranked 7 of them. |

| “AI makes content free to produce” | Over 50% of new articles are now AI-generated | You are competing with everyone else who had the same idea. |

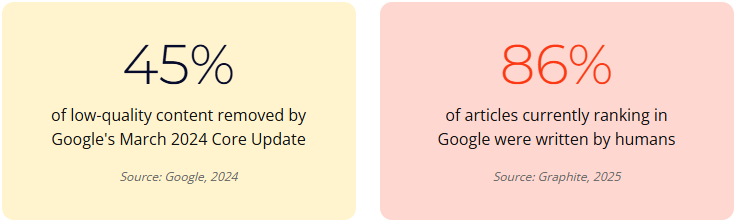

| “We can outpublish the competition” | 86% of ranking articles are still human-written | Your competitors published 8 good pieces. They outrank your 200. |

| “Volume builds authority” | Google’s March 2024 update removed 45% of low-quality content | Volume built a penalty risk, not authority. |

| “The board wants to see output” | Only 36% of marketers can measure content ROI | The board is measuring production. Nobody is measuring performance. |

If 200 mediocre articles were the answer, content farms would be Fortune 500 companies. They are not. Most of them were wiped out by algorithm updates years ago. The ones publishing AI content at scale today are the content farms of 2026. The ending will be the same.

The Board Meeting That Started This

We know how this conversation goes because we have sat in it more times than we can count. The CEO saw a LinkedIn post about a company that “10x’d their content output with AI”. The CFO wants to know why the content budget is not going down now that robots can write. The head of marketing is nodding cautiously, because saying “that is not how content works” in a board meeting is career-limiting honesty.

So nobody says it. Instead, the team gets a new KPI: publish more. The content calendar, which was already a spreadsheet pretending to be a strategy, now has 200 cells where it used to have 20. The AI tools get activated. The articles start appearing. The publishing schedule is full. The head of marketing takes a screenshot of the production numbers for the monthly report. Someone puts a green arrow next to “content output: up 900%”.

That green arrow is not a performance metric. It is a production metric. Those are different things.

Ninety-six point five five per cent. That is not a rounding error. That is the vast majority of everything ever published on the internet receiving precisely zero visits from search. And that was before AI tools made it possible for every organisation on the planet to publish at volume. The denominator just got dramatically larger. Your 200 articles are competing with everyone else’s 200 articles for the same finite attention.

What Google Actually Did (and What It Tells You)

In March 2024, Google released a core update specifically targeting low-quality, unoriginal content. The stated goal was to reduce unhelpful content in search results by 40%. The actual result exceeded that: 45% of low-quality content was removed from search results. Entire sites were deindexed. The ones that disappeared had a common profile: high volume, low originality, thin depth.

Google’s official position on AI content is nuanced but instructive: “Our focus is on the quality of content, rather than how content is produced.” They do not penalise AI content for being AI content. They penalise it for being bad. And most of it is bad. Because most of it was produced without strategy, without expertise, without original data, and without any editorial judgment about whether it was worth publishing in the first place.

Two numbers should stop everyone who is arguing for volume. Over half the content being published is now AI-generated. But 86% of the content that actually ranks is human-written (Graphite, 2025). The maths is brutal. AI is producing most of the content and earning a small fraction of the visibility. The AI content that does rank, 14% of ranking articles, has something the rest of the AI output does not: human strategy, editing and expertise behind it.
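The arithmetic behind that claim can be sketched in a few lines. This is a rough illustration only: it assumes the AI share of new articles is exactly 50% (the article says "over half", so the real gap would be wider) and it treats "new articles" and "ranking articles" as comparable populations, which is a simplification. The function name `representation_index` is a label invented for this sketch.

```python
# Shares quoted in the article (Graphite, 2025). The 50% figure is a floor,
# so this sketch understates the gap if the true AI share is higher.
ai_share_of_new_articles = 0.50
ai_share_of_ranking_articles = 0.14
human_share_of_ranking_articles = 0.86


def representation_index(share_of_rankings: float, share_of_output: float) -> float:
    """Above 1.0 means over-represented in rankings relative to output."""
    return share_of_rankings / share_of_output


ai_index = representation_index(
    ai_share_of_ranking_articles, ai_share_of_new_articles
)
human_index = representation_index(
    human_share_of_ranking_articles, 1 - ai_share_of_new_articles
)

# How much more likely a human-written article is to be among the ranking
# articles than an AI-generated one, under the assumptions above.
relative_advantage = human_index / ai_index

print(f"AI representation index:    {ai_index:.2f}")
print(f"Human representation index: {human_index:.2f}")
print(f"Relative advantage:         {relative_advantage:.1f}x")
```

Under those assumptions, a human-written article is roughly six times as likely to be among the ranking articles as an AI-generated one.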

AI is producing the majority of new content on the internet. It is earning a minority of the rankings. That is not an adoption problem. That is a quality problem. And it is the quality problem your board is about to create if they get their 200 articles a month.

The Sites That Tried It (and What Happened Next)

The data on this is now clear enough to be uncomfortable. Sites that published 50 to 100 quality AI articles with human editing saw traffic increases of 30 to 80%. Sites that published 1,000 or more unedited AI articles saw traffic drops of 40 to 90%. The difference was not the tool. The difference was whether anyone with expertise was involved in the process.

That is not a subtle distinction. That is the difference between growth and destruction. And the timeline is short. The sites that flooded with volume did not decline slowly. They declined within months. Because the algorithm updates are now specifically designed to detect and demote exactly this pattern: sudden volume increase, thin content, no editorial differentiation.

| Approach | Volume | Result |
|---|---|---|
| Quality AI content with human editing | 50-100 articles | Traffic increase of 30-80% |
| Unedited AI content at scale | 1,000+ articles | Traffic decline of 40-90% |
| The difference | Human expertise in the process | The gap between growth and penalty |

There is no ambiguity in those numbers. The strategy your board is proposing has been tried. The results are in. It does not work. Not because AI is bad, but because volume without quality has never worked, and the systems designed to detect it are now better than ever at doing exactly that.

Why AI Search Makes This Worse, Not Better


Here is where the argument for volume falls apart completely. The shift towards AI-powered search, where ChatGPT, Gemini and Perplexity provide direct answers with cited sources, does not reward volume. It rewards authority, originality and depth. The content that gets cited by AI systems is not the content that was easiest to produce. It is the content that was hardest to replicate.

82% of articles cited by ChatGPT and Perplexity were written by humans. Only 18% were AI-generated (Graphite, 2025). Adding statistics to content increases AI visibility by 22%. Adding direct quotations boosts it by 37% (The Digital Bloom, 2025). Only 11% of domains are cited by both ChatGPT and Perplexity. AI citation is not just selective. It is ruthlessly selective.

Your 200 AI-generated articles are not going to get cited by ChatGPT. They are going to train ChatGPT. There is a meaningful difference between contributing to AI’s knowledge base and being cited by it. One builds your authority. The other builds everyone else’s. And the organisations that understand this are investing in the kind of content AI systems want to cite: original research, named data, expert perspective, and clear methodology. Not volume. Not ever volume.

This is where generative engine optimisation becomes a genuine strategic advantage, not a buzzword. The brands being cited in AI search results are the ones producing content that AI cannot generate on its own. Proprietary data. First-hand case studies. Expert opinion with named attribution. Your 200 articles contain none of these things.

AI can summarise everything that has already been said. It cannot say anything new. That is your opening. And you are about to close it by publishing 200 pieces of content that say exactly what everything else already says.

The Trust Problem Nobody Mentions in the Board Meeting

52% of consumers report reduced engagement with content they believe is AI-generated (ArtSmart, 2024). 77% want to know if content was created by AI (Baringa, 2025). And there is a 39-point trust gap between how favourably marketers view AI (77%) and how favourably consumers view it (38%). The people producing AI content at scale love it. The people reading it do not.

This matters more in some sectors than others. In healthcare, where your content might influence treatment decisions, the trust implications are regulatory, not just reputational. In professional services, where clients are buying expertise, the signal of AI-generated content is “we do not have enough expertise to write our own thought leadership”. In the charity sector, where donor trust is the entire business model, flooding your site with generic AI content erodes the credibility that drives donations.

There is a 39-point gap between how marketers feel about AI content and how their audiences feel about it. Marketers see efficiency. Audiences see laziness. Your board is on one side of that gap. Your customers are on the other. Guess which side matters more.

“Slop” was named Word of the Year in 2025 by Macquarie Dictionary, Merriam-Webster and the American Dialect Society. That is not an obscure industry term. That is mainstream recognition that the public has noticed the flood and is not impressed. Your brand does not want to be associated with a word that describes the thing people are actively learning to ignore.

29.3% of marketing professionals say AI-generated content flooding channels is their top industry concern. Not a side concern. Their top concern. The people who work in content marketing are worried about the thing your board just asked them to do more of.

If 200 mediocre articles were the answer, Wikipedia would have solved marketing in 2006. Volume is not strategy. It never was.

What Ashley Salek Tells Clients in the Room

We get this question in client meetings more often than you might expect. Someone has seen a demo, or read an article, or the CFO has asked why the content budget is what it is. And the question always sounds reasonable on the surface: why aren’t we just producing more?

My answer is always the same. We can produce more. We could produce 200 pieces next month if that is what you wanted. But more is not the same as better. And in a world where AI can generate unlimited content, the only thing that actually differentiates you is the content that AI cannot generate: your expertise, your data, your opinions, your experience. The moment you abandon that in favour of volume, you become indistinguishable from every other brand doing the same thing. Which is most of them.

The organisations we see winning are not the ones publishing the most. They are the ones publishing the most useful, specific, credible content in their space. That is a very different brief.

Ashley Salek, Agency Director, Seventh Element

The Quality vs Quantity Diagnostic

This is the framework we use when a client asks us “should we produce more content?” It is designed to be shared internally when the volume conversation surfaces at board level. Before you answer the question, run the diagnostic.

Step 1: Audit what you already have

Pull your last 50 published articles. Check the analytics. How many have received any organic traffic in the last 90 days? How many have generated a lead, a conversion or a measurable action? How many appear in AI search citations?

For most organisations, the answer is sobering. Fewer than 10% of published articles drive meaningful results. The other 90% exist. That is all they do. They exist on your site, receiving no traffic, generating no leads, building no authority. Publishing 200 more articles that perform like the bottom 90% does not solve the problem. It multiplies it.
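If your analytics tool can export per-article figures, the Step 1 audit is a short calculation. The sketch below assumes a hypothetical 90-day export with three counts per article; the `ArticleStats` fields and the sample rows are invented for illustration and will not match any particular analytics product.

```python
from dataclasses import dataclass


@dataclass
class ArticleStats:
    """One row of a hypothetical 90-day analytics export."""
    url: str
    organic_visits: int
    conversions: int
    ai_citations: int


def audit(articles: list[ArticleStats]) -> dict[str, float]:
    """Return the share of articles showing each kind of result."""
    total = len(articles)
    if total == 0:
        return {"with_traffic": 0.0, "with_conversions": 0.0, "with_ai_citations": 0.0}
    return {
        "with_traffic": sum(a.organic_visits > 0 for a in articles) / total,
        "with_conversions": sum(a.conversions > 0 for a in articles) / total,
        "with_ai_citations": sum(a.ai_citations > 0 for a in articles) / total,
    }


# Illustrative data only, not real results.
sample = [
    ArticleStats("/guide-a", organic_visits=420, conversions=3, ai_citations=1),
    ArticleStats("/post-b", organic_visits=0, conversions=0, ai_citations=0),
    ArticleStats("/post-c", organic_visits=12, conversions=0, ai_citations=0),
    ArticleStats("/post-d", organic_visits=0, conversions=0, ai_citations=0),
]

report = audit(sample)
for metric, share in report.items():
    print(f"{metric}: {share:.0%}")
```

Run it on your last 50 articles and put the three percentages in front of the board before anyone commissions article 51.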

Step 2: Map content to commercial outcomes

Every piece of content should connect to a specific commercial objective: a keyword opportunity, a buying-stage gap, a question your target audience is asking that you are not currently answering. If you cannot articulate the commercial purpose of a piece before publishing it, you are filling a calendar, not building a strategy.

| Content With Strategy | Content Without Strategy |
|---|---|
| Briefed against a specific search intent or audience need | Topic picked from a brainstorm or trend list |
| Contains original data, expert quotes, or proprietary insight | Summarises what is already on the first page of Google |
| Measured by engagement depth, citation rate, pipeline attribution | Measured by “did we publish it on time” |
| Updated quarterly based on performance data | Published once and forgotten |
| Part of a topic cluster with internal linking architecture | Standalone article with no connection to anything else |
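One way to enforce "no commercial purpose, no article" is to make the brief a structured record that cannot be commissioned while fields are blank. This is a minimal sketch, not a prescribed template: the four fields follow the brief described in the action plan (target keyword, audience segment, buying stage, success metric), and the class and method names are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class ContentBrief:
    """Hypothetical brief; every field must be filled before commissioning."""
    target_keyword: str = ""
    audience_segment: str = ""
    buying_stage: str = ""
    success_metric: str = ""

    def missing_fields(self) -> list[str]:
        # A field counts as missing if it is empty or only whitespace.
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def ready_to_commission(self) -> bool:
        return not self.missing_fields()


# Illustrative brief with two gaps.
brief = ContentBrief(
    target_keyword="content audit template",
    buying_stage="consideration",
)

if not brief.ready_to_commission():
    print("Not ready. Missing:", ", ".join(brief.missing_fields()))
```

A brief that fails this check is a calendar entry, not a commissioned piece.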

Step 3: Score your existing content estate

For every piece in your library, score it on three dimensions:

- Depth: Does it contain information, data or perspective that a reader cannot get from the top 5 Google results? If not, it is commodity content.

- Expertise: Does it demonstrate genuine experience in the subject? Named authors, specific examples, original research. If it could have been written by anyone with access to ChatGPT, it is not differentiated.

- Performance: Is it earning traffic, citations, engagement or conversions? Content that earns none of these four should be consolidated, improved or removed. Not supplemented with 200 more articles.

Before producing more content, understand what your existing content is actually doing. If fewer than 10% of your articles drive results, the answer is not “publish more”. The answer is “fix the 90% that is doing nothing”. Or delete it. Deleting underperforming content has been shown to improve site-wide authority.
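The three-dimension score can be turned into a simple triage rule. The sketch below is one possible encoding, not a standard: the 0 to 3 scale, the threshold of 5 and the wording of the recommendations are all assumptions chosen for illustration. Only the rule that content scoring zero on everything should be consolidated, rewritten or removed comes from the diagnostic itself.

```python
def triage(depth: int, expertise: int, performance: int) -> str:
    """Recommend an action from three scores, each 0 (absent) to 3 (strong).

    The scale and thresholds are illustrative assumptions.
    """
    for score in (depth, expertise, performance):
        if not 0 <= score <= 3:
            raise ValueError("scores must be between 0 and 3")

    total = depth + expertise + performance
    if total == 0:
        # From the diagnostic: zero on all three dimensions.
        return "consolidate, rewrite or remove"
    if performance == 0 or total < 5:
        # Assumed rule: weak or non-performing pieces get fixed first.
        return "improve before publishing anything new"
    return "keep and refresh on schedule"


# Illustrative scores only.
for title, scores in {
    "Generic explainer": (0, 0, 0),
    "Expert piece nobody finds": (3, 3, 0),
    "Solid case study": (2, 2, 2),
}.items():
    print(f"{title}: {triage(*scores)}")
```

Tune the thresholds to your own library. The point is that every piece gets an explicit decision rather than sitting untouched.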

The Human-AI Editorial Model That Actually Works

This is not an argument against using AI. AI is excellent infrastructure for content production. It can draft, structure, research at speed, repurpose across formats. AI content with human strategic oversight performs 4.1x better than fully automated output. The tool is not the problem. The absence of human judgment is.

Here is what a working model looks like:

- Strategy layer (human): What to create, for whom, why, and what success looks like. AI cannot do this because it requires commercial context, audience insight and editorial judgment that comes from actually knowing your market.

- Research layer (AI + human): AI gathers data, identifies gaps, surfaces relevant sources. A human verifies, contextualises and adds insight from experience. AI can find the statistic. Only a human knows which statistic matters.

- Production layer (AI + human): AI drafts structure and initial content. A human adds expertise, opinion, voice and the things a reader cannot get elsewhere. This is where 200 articles become 20 good ones.

- Quality layer (human): Fact-checking, editorial review, E-E-A-T signals. Named authors. Specific expertise. The elements that determine whether search and AI systems treat your content as authoritative or disposable.

- Distribution layer (AI + human): AI repurposes for channels. Human decides placement, timing and audience targeting. The content that earns visibility is also the content that gets AI-powered content systems working in its favour.

Content pieces with over 2 minutes time-on-page average 3x more conversions. Long-form blog posts generate 9x more leads than short-form posts. Content marketing generates $3 for every $1 invested, compared to $1.80 for paid advertising. The ROI is there. But it is in depth, not volume.

What to Say When the Board Asks

The next time the question surfaces, here is the answer. Not a defensive answer. A strategic one.

- “We can produce 200 articles. Most of them will get zero traffic.” 96.55% of web pages receive no Google traffic. Publishing more content into a landscape where the vast majority of content is invisible does not change the odds. It confirms them.

- “Google is actively removing the kind of content we would produce at that volume.” The March 2024 update removed 45% of low-quality content. Sites that scaled AI content without editorial oversight saw traffic drops of 40-90%.

- “AI search systems cite human-written content more than 4x as often as AI content.” 82% of AI citations go to human-written content, against 18% for AI-generated content. Producing AI content at scale makes us less visible in the search environments that are growing fastest.

- “Our audiences do not trust AI content.” 52% of consumers reduce engagement with AI-generated content. 77% want to know if content was AI-created. The trust cost of volume is real and measurable.

- “The ROI is in depth, not volume.” Long-form generates 9x more leads. Content with 2+ minutes time-on-page converts 3x better. The organisations winning are not publishing the most. They are publishing the most useful.

Then hand them this article. Browse the Fuel Room for more on building content that actually earns its place in search and AI citation. And read our guide to SEO services that prioritise depth over volume.

Your Action Plan: The Quality vs Quantity Diagnostic

- Audit your existing content estate. Pull your last 50 articles. Check how many received organic traffic, generated leads, or appeared in AI citations in the last 90 days.

- Score every piece on depth, expertise and performance. Content that scores zero on all three should be consolidated, rewritten or removed.

- Map content to commercial outcomes. Every article needs a specific brief: target keyword, audience segment, buying stage, and success metric.

- Implement a human-AI editorial model. AI drafts and structures. Humans add strategy, expertise, original data and editorial judgment.

- Measure performance, not production. Replace “articles published per month” with engagement depth, citation rate, time-on-page, and pipeline attribution.

- Share this article with your board. Print the table. Show the data. Move the conversation from “how much can we produce?” to “how much is worth producing?”

Frequently Asked Questions

Should we use AI for content at all?

Yes. AI is excellent infrastructure for content production: drafting, structuring, researching at speed. The problem is using AI as a replacement for strategy, expertise and editorial judgment. AI content with human strategic oversight performs 4.1x better than fully automated output. Use AI as a very fast typist. Do not use it as your content strategist.

How much content should we actually publish per month?

There is no universal number. The right volume is whatever you can produce to a standard worth reading. For most B2B organisations, that is 4 to 12 pieces per month, not 200. The question is not “how much can we produce?” but “how much can we produce that is worth reading?”

Does Google penalise AI-generated content?

Google does not penalise content for being AI-generated. It penalises content for being low-quality, unhelpful and unoriginal. The March 2024 Core Update removed 45% of low-quality content. 86% of articles currently ranking were written by humans. The tool is not the problem. The quality is.

How do we know if our content quality is good enough?

Ask three questions. Would someone in your target audience bookmark this? Does this contain something they cannot get from the first page of Google? Could a competitor have written this by changing the logo? If the answer to either of the first two is no, or the answer to the third is yes, your content is not differentiated enough.

What does a quality-over-quantity content strategy look like in practice?

It starts with an editorial strategy tied to commercial objectives. Each piece is briefed against a specific audience need. AI assists with research, drafting and structure. Humans add expertise, opinion, original data and editorial judgment. Every piece is measured against engagement depth, citation rate and pipeline attribution.


Sources

- Ahrefs (2023) – 96.55% of web pages get zero organic traffic from Google (14 billion pages studied)

- Graphite / Futurism (2025) – Over 50% of new articles are AI-generated; 86% of ranking articles are human-written; 82% of AI-cited articles are human-written

- Google (2024) – March 2024 Core Update removed 45% of low-quality content from search results

- Snezzi (2025) – Sites with 50-100 quality AI articles saw 30-80% traffic gains; sites with 1,000+ unedited articles saw 40-90% traffic drops

- The Digital Bloom (2025) – Statistics increase AI visibility by 22%; quotations by 37%; only 11% of domains cited by both ChatGPT and Perplexity

- ArtSmart / SmythOS (2024) – 52% of consumers reduce engagement with AI-generated content; 39-point trust gap between marketers and consumers on AI

- Baringa (2025) – 77% of consumers want to know if content was created by AI

- Revenue Zen / Genesys Growth (2025) – Content marketing generates $3 per $1 invested; long-form generates 9x more leads; only 36% can measure content ROI

- Siege Media (2025) – 97% of content marketers plan to use AI for content in 2026

- Pangram Labs / NewsCatcher (2024) – 60,000 AI-generated news articles published daily